Designed to shape the future, xLSTM turns back the years. xLSTM revives the Long Short-Term Memory (LSTM) idea, i.e., the concepts of the constant error carousel and gating. Introduced by Sepp Hochreiter and Jürgen Schmidhuber, LSTM is a revolutionary 1990s Deep Learning architecture that managed to overcome the vanishing gradient problem for sequential tasks such as time series or language modeling. Since then, LSTM has stood the test of time and contributed to numerous Deep Learning success stories; in particular, LSTMs constituted the first Large Language Models (LLMs). However, the advent of the Transformer technology with parallelizable self-attention at its core marked the dawn of a new era, outpacing LSTM at scale.

NXAI is funded to raise a simple question: How far do we get in language modeling when scaling LSTMs to billions of parameters, leveraging the latest techniques from modern LLMs?

To answer this question, our team led by Sepp Hochreiter had to overcome well-known limitations of LSTM, namely the inability to revise storage decisions, limited storage capacities, and lack of parallelizability. First, our team introduces exponential gating with appropriate normalization and stabilization techniques. Second, our team equips xLSTM with new memory structures and consequently obtains: (i) sLSTM with a scalar memory, a scalar update, and new memory mixing, and (ii) mLSTM, which is fully parallelizable, with a matrix memory and a covariance update rule. Integrating these LSTM extensions into residual block backbones yields xLSTM blocks that are then residually stacked into xLSTM architectures.
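As a rough illustration of exponential gating with stabilization and of the matrix memory with a covariance update, here is a minimal single-head sketch in plain Python. It follows the recurrent form as we read it from the paper, with several simplifying assumptions: the forget gate is taken to be exponential (a sigmoid is also possible), the output gate, key scaling, and multi-head structure are omitted, and the names `stabilized_gates`, `mlstm_write`, and `mlstm_read` are hypothetical and not part of any released code.

```python
import math


def stabilized_gates(i_pre, f_pre, m_prev):
    """Exponential input/forget gates, computed in log space.

    i_pre, f_pre: gate pre-activations (assumption: both gates are exponential).
    m_prev: running stabilizer state.
    Returns (i_gate, f_gate, m_new); both gate values are at most 1.
    """
    m_new = max(f_pre + m_prev, i_pre)
    i_gate = math.exp(i_pre - m_new)
    f_gate = math.exp(f_pre + m_prev - m_new)
    return i_gate, f_gate, m_new


def mlstm_write(C, n, m, k, v, i_pre, f_pre):
    """Store the pair (k, v) in the matrix memory C and normalizer n."""
    i_gate, f_gate, m_new = stabilized_gates(i_pre, f_pre, m)
    # Covariance update: C <- f * C + i * (v k^T)
    C_new = [
        [f_gate * C[r][c] + i_gate * v[r] * k[c] for c in range(len(k))]
        for r in range(len(v))
    ]
    # Normalizer update: n <- f * n + i * k
    n_new = [f_gate * n[c] + i_gate * k[c] for c in range(len(k))]
    return C_new, n_new, m_new


def mlstm_read(C, n, q):
    """Retrieve a value for query q: C q / max(|n . q|, 1)."""
    numerator = [sum(row[c] * q[c] for c in range(len(q))) for row in C]
    # Simplification: the lower bound of 1 is applied to the stabilized state.
    denominator = max(abs(sum(n[c] * q[c] for c in range(len(q)))), 1.0)
    return [x / denominator for x in numerator]


# Store two values under orthogonal keys, then query with the first key.
C = [[0.0, 0.0], [0.0, 0.0]]
n = [0.0, 0.0]
m = 0.0
C, n, m = mlstm_write(C, n, m, k=[1.0, 0.0], v=[2.0, 3.0], i_pre=0.0, f_pre=0.0)
C, n, m = mlstm_write(C, n, m, k=[0.0, 1.0], v=[5.0, 7.0], i_pre=0.0, f_pre=0.0)
print(mlstm_read(C, n, [1.0, 0.0]))  # prints [2.0, 3.0]
```

Subtracting the running maximum `m_new` inside the exponentials keeps both gates bounded by 1, so a very large input-gate pre-activation does not overflow, whereas a naive `math.exp(i_pre)` would. In this sketch, the ability to revise a storage decision comes from a large input gate driving the rescaled forget gate towards zero, which overwrites what was stored before.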

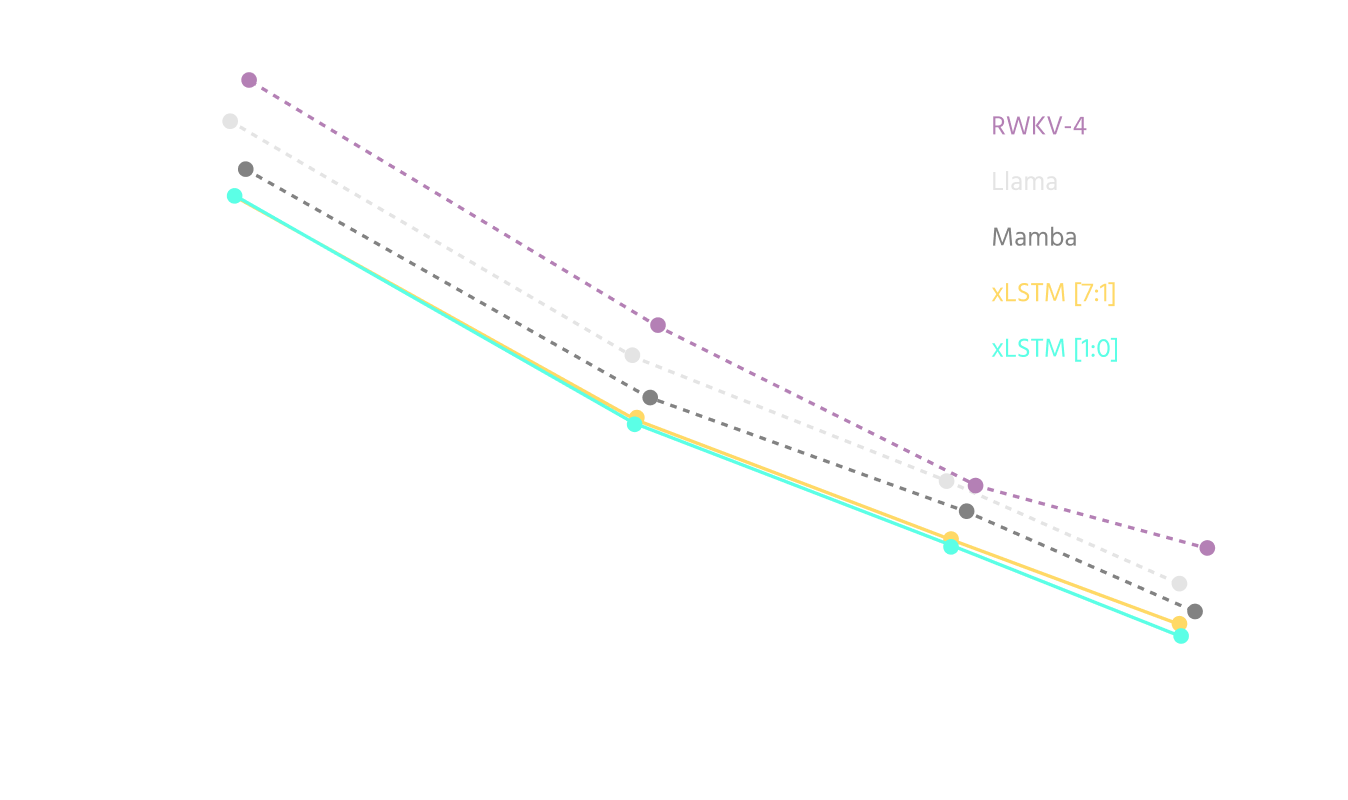

These modifications are enough for xLSTM to perform favorably when compared to state-of-the-art Transformers and State Space Models, both in performance and scaling. The results reported in our paper indicate that xLSTM models will be a serious competitor to current Large Language Models.

xLSTM is our innovative new building block, i.e., the heart of a new wave of European LLMs, which we are developing in-house here at NXAI.